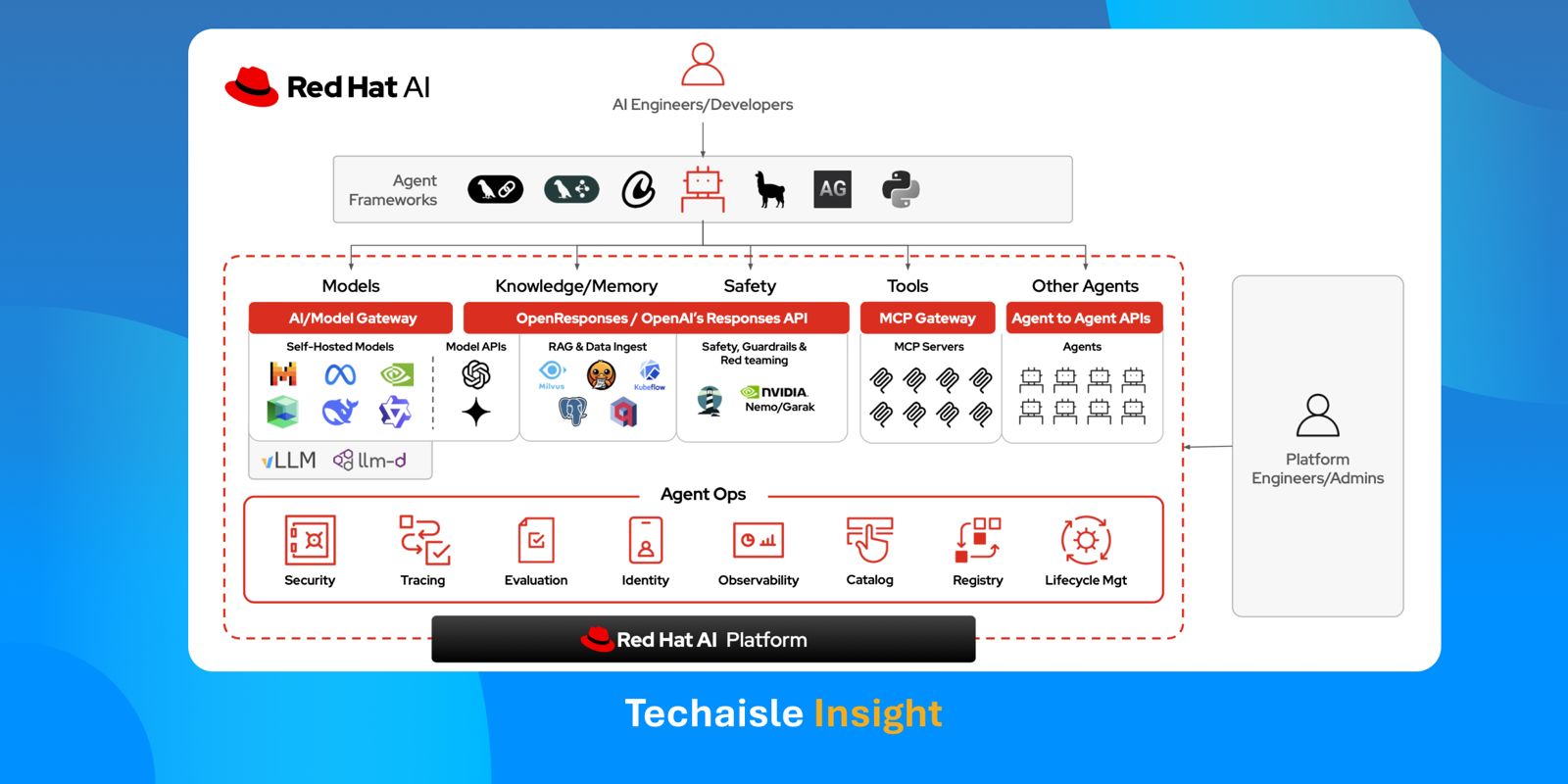

Red Hat is fundamentally rewiring how enterprise and midmarket organizations deploy Agentic AI. Rather than joining the crowded, highly commoditized race to build the smartest foundation model or the cleverest standalone agent, Red Hat is aggressively building the underlying "metal-to-agent" infrastructure needed to deploy and manage agents across a hybrid cloud environment. Its aim is the secure, governed, and predictable execution environment required to move AI out of experimental sandboxes and into production. By staying out of the volatile framework wars, and declaring strict agnosticism about whether a customer builds an agent on OpenAI-compatible APIs or on customized open-source models, Red Hat positions itself as the universal enabler: it supplies the API foundation, the deployment mechanisms, and the non-negotiable operational guardrails required to run any agent in production.

The Era of Constrained Autonomy

This pragmatic infrastructure play arrives just as the enterprise AI narrative faces a massive reality check. The market is moving past the conversational parlor tricks of LLMs and rapidly entering the era of Agentic AI. However, as the focus shifts toward systems capable of reasoning, multi-step planning, and independent execution, businesses are slamming into a formidable wall of operational and compliance risk. It is one thing for an AI model to draft an email; it is an entirely different risk paradigm for an autonomous agent to access production databases, negotiate with other microservices, and independently execute infrastructure configuration changes. Unconstrained AI autonomy, lacking accountability and auditability, is not an asset; it is a critical operational liability. The winning narrative for the next 12 to 18 months hinges on what I call "constrained autonomy," a concept Red Hat fully embraces: it has built its strategy around the principles of being "autonomous with responsibility" and "autonomous with safety."