The Wall Street Journal carried an article on how regulatory burdens had made community banks “too small to succeed” even though they perform better than larger banks, being better capitalized and having lower default rates.

The advent of cloud technologies has the potential to change the WSJ’s dire prognosis.

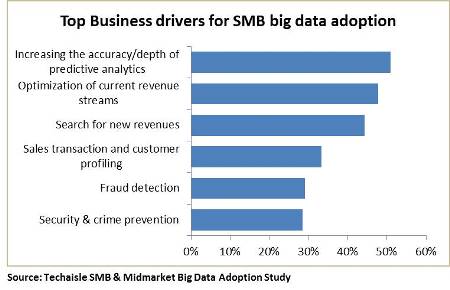

Cloud may have first been introduced as a means of reducing CAPEX and/or overall IT costs, but today small and midmarket businesses view it as a means of increasing business agility and of introducing capabilities that would have been cost- or time-prohibitive to deploy on traditional technology. Complementary to cloud, big data analytics makes it possible to connect a variety of data sets from disconnected sources to produce business insights, whether for increasing sales, improving products or detecting fraud. SMB banks are a specific segment of SMBs that can derive the benefits of customer insight while meeting their mandatory regulatory requirements.

Techaisle classifies SMB banks as those with less than $10B in assets and medium-sized banks as those with between $10B and $100B in assets. SMB banks below $10B in assets, often called “community banks”, play a very important role in the ecosystem of SMB businesses. Although the FDIC, OCC and FRB have different definitions of community banks, it is important to note that these smaller banks not only accounted for nearly half of the roughly $600B in outstanding small business loans at the end of 2014 but also play a disproportionately large role in the $1.8 trillion residential mortgage origination market.

Unlike large banks, SMB banks are typified by George Bailey in “It’s a Wonderful Life”. These banks usually have keen insights into their customers based on personal relationships, and they carry a tremendous amount of tribal knowledge about those customers, which they use to make business decisions. While this corpus of knowledge may not be codified, it does make a difference in their business operations. But is that enough in today’s hyper-competitive economy, where the relationship is increasingly controlled and dictated by customers?

Then there is another question: are these smaller banks doing enough to detect fraud? High-risk businesses that have been denied services by large banks tend to move their business to smaller banks that are less equipped to analyze these risks. These smaller banks are unknowingly exposing themselves to fraud as well as compliance risk. Regulations are agnostic to bank size and are as unforgiving of SMB banks as they are of large banks. A cloud-based analytics solution may just be the recipe for success for the smaller banks. In fact, these banks are no different from midmarket businesses (or even small businesses) in their objectives for adopting big data.

Monitoring, analyzing and reporting on very large volumes of data are typically the largest components of regulatory costs for SMB banks. Many use antiquated technology and manual processes to manage their compliance requirements. Banks that are able to automate the process of managing data for regulatory requirements can gain the added benefit of a unique view of their customers through a single technology solution.

According to Shirish Netke, CEO of Amberoon, a provider of Big Data solutions for banks, “A lot of the data that is required for regulatory compliance can also be easily parlayed into getting insights on the bank’s customers and improving business”. Amberoon has built a banking solution for SMB banks provisioned on the IBM SoftLayer cloud.

Security and privacy (especially FFIEC requirements), traditional inhibitors of cloud adoption, are legitimate concerns for banks. After all, banks are the custodians of individuals’ money, facilitators of trade and commerce, and the lifeline of businesses. However, it may be argued that these inhibitors have already been successfully addressed by service bureaus. A very large percentage of SMB banks outsource their core banking system to service providers such as Fiserv and FIS Global, which have built very large, scalable service bureaus with the economies of scale afforded by centralizing technology resources.

As Noor Menai, CEO of CTBC Bank, aptly puts it: “Outsourced technology services are nothing new in the banking industry. There is a compelling reason to use big data technologies in banks if they are available at an affordable cost in a secure manner. Cloud has the potential to provide both”.

Big data analytics in the cloud can be an execution advantage, and may even propel SMB banks ahead of larger banks on solutions that both address regulatory necessities and deliver a competitive edge from customer analytics. CRM offers a precedent. Historically, Siebel, an on-premise solution, was usually deployed in large enterprises and was out of reach for smaller businesses. Salesforce, a cloud solution, changed the perception, adoption, usage and affordability of CRM and provided immediate business outcomes. Today Salesforce is used by both SMBs and large enterprises.

Combining the benefits of cloud with the advantages of big data analytics may just be the prescription that SMB banks need for business growth (cross-selling, upselling services), meeting regulatory requirements such as KYC/AML/BSA and deep-diving into fraud detection.

One should also not forget that big data implementations require a unique combination of technical, operational and business skills if they are to be used in a sustained manner. Needless to say, these skills are in short supply, though deep-pocketed larger banks can afford them. While some smaller banks, including community banks, can spend the money to experiment with big data pilots, they do not have the capacity to go through expensive iterations to get it right. While larger banks have the luxury of choosing between on-premise big data and cloud big data, for smaller banks the choice could very well be between doing big data on the cloud and not doing it at all. The remaining question therefore is: which big data cloud supplier will take the lead in educating, evangelizing and then executing on the needs of SMB banks?

In the world of business, most predictive analytical tools are quantitative: numeric data is used to build an input-output model, and the output is the prediction for specific inputs. For example, “a 10% increase in advertising in January will result in a 1% increase in sales in May” is a typical output from predictive analytics.
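The input-output idea can be sketched in a few lines of Python. This is only a minimal illustration, not a description of any vendor's tool: it assumes a simple linear relationship and a fixed four-month lag between advertising spend and sales, and the monthly figures, the function names `fit_lagged_linear` and `pct_change_in_prediction`, and the lag itself are all hypothetical.

```python
# Hypothetical monthly figures, invented purely for illustration.
# The sales series was constructed so that sales in month t depend
# linearly on advertising spend four months earlier.
ad_spend = [100, 110, 120, 105, 130, 115, 125, 140, 135, 150, 145, 160]
sales = [1000, 1005, 1010, 1002, 1000, 1010, 1020, 1005, 1030, 1015, 1025, 1040]

LAG_MONTHS = 4  # assumed lag, e.g. January spend influencing May sales


def fit_lagged_linear(inputs, outputs, lag):
    """Least-squares fit of outputs[t] = intercept + slope * inputs[t - lag]."""
    x = inputs[:len(inputs) - lag]   # inputs that have a matching later output
    y = outputs[lag:]                # outputs observed `lag` periods later
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def pct_change_in_prediction(intercept, slope, base_input, pct_increase):
    """Predicted % change in output when the input rises by pct_increase %."""
    base = intercept + slope * base_input
    boosted = intercept + slope * base_input * (1 + pct_increase / 100)
    return (boosted - base) / base * 100


if __name__ == "__main__":
    intercept, slope = fit_lagged_linear(ad_spend, sales, LAG_MONTHS)
    change = pct_change_in_prediction(intercept, slope, 100, 10)
    print(f"Predicted sales change {LAG_MONTHS} months later: {change:.2f}%")
```

Because the made-up data was built to be exactly linear, the fitted model reproduces the kind of statement quoted above: a 10% rise in advertising from a base of 100 maps to roughly a 1% rise in predicted sales four months later. Real data would be noisy, and the lag and functional form would themselves have to be estimated.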
