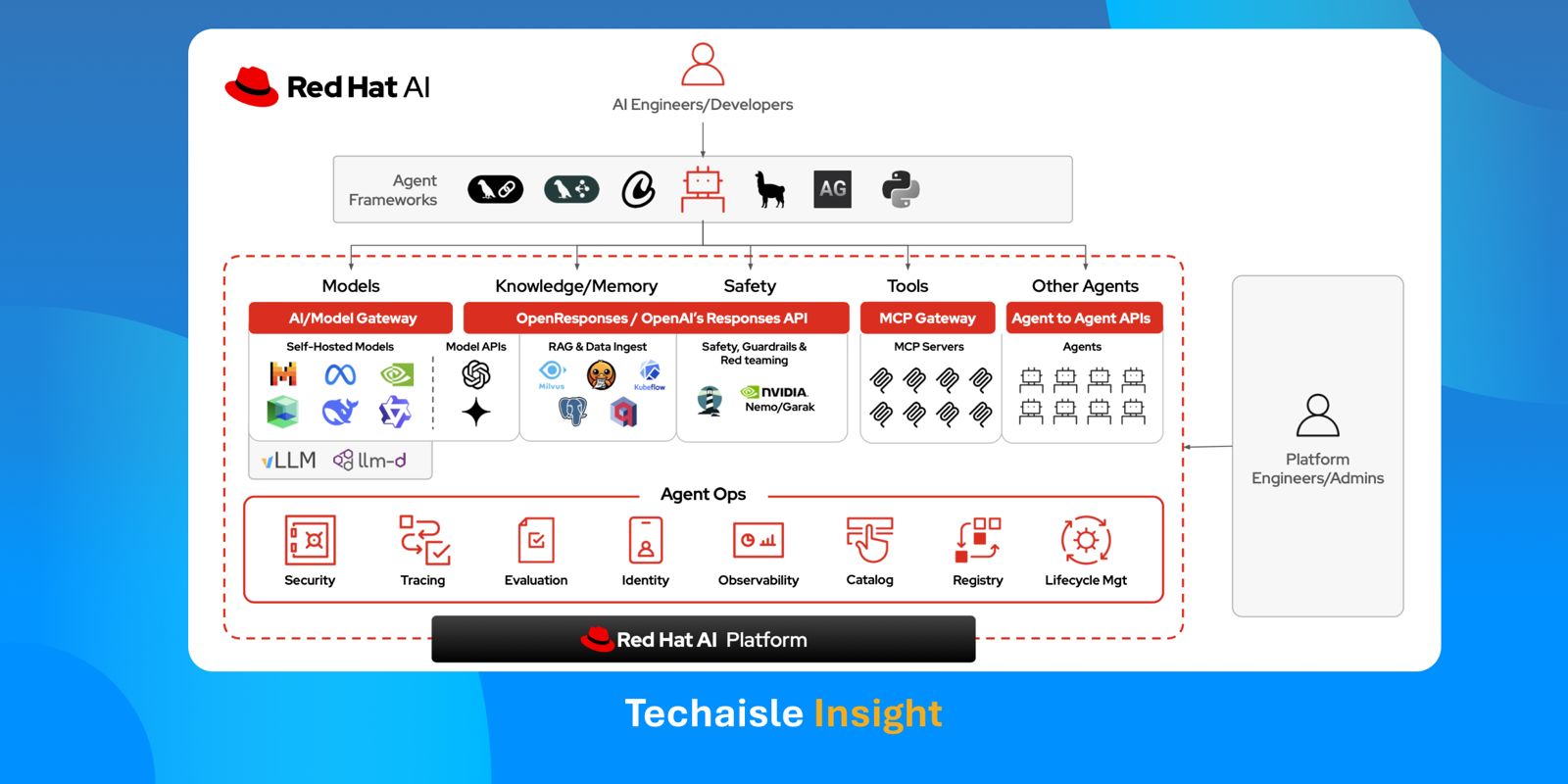

Red Hat is fundamentally rewiring the way enterprise and midmarket organizations deploy Agentic AI. Rather than joining the crowded, highly commoditized race to build the smartest foundation model or the most clever standalone agent, Red Hat is aggressively architecting the underlying "metal-to-agent" infrastructure to deploy and manage agents across a hybrid cloud environment. It is actively building the secure, governed, and predictable execution environment necessary to move AI from experimental sandboxes to production hybrid clouds. By refusing to engage in the volatile framework wars - declaring strict agnosticism about whether a customer builds an agent using OpenAI-compatible APIs or customized open-source models - Red Hat positions itself as the universal enabler. It is providing the fundamental API foundation, the deployment mechanisms, and the non-negotiable operational guardrails required to run any agent in a production environment.

The Era of Constrained Autonomy

This pragmatic infrastructure play arrives exactly as the business artificial intelligence narrative faces a massive reality check. The market is moving past the conversational parlor tricks of LLMs and rapidly entering the era of Agentic AI. However, as the focus shifts toward systems capable of reasoning, multi-step planning, and independent execution, businesses are slamming into a formidable wall of operational and compliance risk. It is one thing for an AI model to draft an email; it is an entirely different risk paradigm for an autonomous agent to access production databases, negotiate with other microservices, and independently execute infrastructure configuration changes. Unconstrained AI autonomy, lacking accountability and auditability, is not an asset; it is a critical operational liability. The winning narrative for the next 12 to 18 months hinges on what I call "constrained autonomy" - a concept Red Hat completely aligns with, building its strategy around the principles of being "autonomous with responsibility" and "autonomous with safety".

Nowhere is this requirement for constrained autonomy more acute than within highly regulated verticals. Consider financial services or healthcare: an autonomous agent tasked with analyzing real-time patient telemetry or executing compliance-bound financial transactions operates under zero-tolerance regulatory mandates. In these sectors, an unverified hallucination is a catastrophic liability. By allowing organizations to anchor agent execution within localized, air-gapped OpenShift environments, Red Hat provides the non-negotiable infrastructural scaffolding required. It enables enterprises to confidently deploy specialized, vertical-specific agents on highly sensitive proprietary data without risking exposure to the unpredictable compliance gaps of public, multi-tenant models.

Beyond MLOps: The Rise of AgentOps

To enforce this constrained autonomy, Red Hat is initiating a formalized pivot toward "AgentOps". While the broader market has barely begun to digest MLOps, Red Hat is already evolving the DevOps layer to handle the unique complexities of AI agents. The fundamental distinction here is vital: traditional DevOps is designed to manage deterministic code, and MLOps is designed to manage relatively static model weights and predictable inference outputs. AgentOps, conversely, faces the unprecedented challenge of governing non-deterministic behavior. When an AI agent dynamically selects its own tools and alters its execution path based on real-time environmental observations, traditional rollback and version control mechanisms completely break down.

Red Hat is tackling this non-determinism by building an immutable, cryptographically secure chain of custody. It creates a system of governed AI assets that ties specific agents to precise container versions, housed in a model registry today, with an agent registry planned for the second half of this year. Every action or Model Context Protocol (MCP) tool call is traced through an evaluation hub powered by observability platforms like MLflow, which makes an agent's logic trajectory fully auditable. Because Red Hat is standardizing strictly on OpenTelemetry and its generative-AI semantic conventions rather than proprietary formats, a critical agent error in production is not merely caught as a surface-level failure. The system connects the entire trace back to the exact immutable version of the agent and the specific tools it used, enabling surgical fixes and automated continuous integration pipelines.
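To make the chain-of-custody idea concrete, here is a minimal Python sketch of how a failing trace might be tied back to an immutable agent version and the tools it called. It is an illustration only, not Red Hat's implementation: the `AgentVersion` and `ToolCallSpan` records, the `failure_lineage` helper, the agent name, and the digest value are all hypothetical, and the sketch assumes one agent version per trace.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class AgentVersion:
    """Hypothetical registry entry: an agent pinned to an immutable image digest."""
    name: str
    image_digest: str


@dataclass(frozen=True)
class ToolCallSpan:
    """Hypothetical trace record for a single tool call made by an agent."""
    trace_id: str
    agent: AgentVersion
    tool_name: str
    ok: bool


def failure_lineage(spans: List[ToolCallSpan], trace_id: str) -> Optional[Dict[str, object]]:
    """Return the agent version and tool sequence for a trace that contains a failure.

    Returns None when the trace is unknown or every call in it succeeded.
    Assumes all spans in a trace belong to the same agent version.
    """
    in_trace = [s for s in spans if s.trace_id == trace_id]
    failed = [s.tool_name for s in in_trace if not s.ok]
    if not failed:
        return None
    agent = in_trace[0].agent
    return {
        "agent": agent.name,
        "image_digest": agent.image_digest,
        "tools": [s.tool_name for s in in_trace],
        "failed_tools": failed,
    }


# Illustrative data: one trace with a failed tool call, one clean trace.
agent_v1 = AgentVersion("invoice-agent", "sha256:aaa111")
spans = [
    ToolCallSpan("t-1", agent_v1, "lookup_customer", True),
    ToolCallSpan("t-1", agent_v1, "update_ledger", False),
    ToolCallSpan("t-2", agent_v1, "lookup_customer", True),
]
report = failure_lineage(spans, "t-1")
```

The point of the design is that the failure report carries the image digest alongside the tool sequence, so a fix can target one specific agent build rather than "the agent" in general.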

Zero Trust and the Agentic Service Mesh

Where Red Hat truly leverages its historical strategic moat is in the networking and security layers of multi-agent systems. The future state of business AI is not a single, monolithic agent handling all tasks; it is a complex swarm of highly specialized, competing, and collaborating agents. Managing this distributed intelligence requires an "Agentic Service Mesh". Red Hat is answering this exact need by repurposing its battle-tested cloud-native primitives for agentic workloads. It is utilizing Istio to handle east-west traffic and negotiation between agents inside the cluster, while deploying Envoy (via Gateway API) as the north-south gateway with Authorino as the authorization engine to govern external tool access.

More impressively, Red Hat is deploying SPIFFE and SPIRE - technologies traditionally used for microservice security - to assign cryptographic workload identities to AI agents. Combined with granular token exchange, which ensures an agent only receives a tightly scoped token valid for a specific, approved tool, Red Hat is actively laying the groundwork for a true Zero Trust AI environment. If an autonomous agent goes rogue or attempts an escalating series of unapproved API calls, the gateway validates those actions against its scoped identity, effectively containing the blast radius. Furthermore, by exploring Istio's ambient mode and node-level components to handle token exchange, Red Hat is working to mitigate the massive latency overhead that would normally cripple high-velocity agent reasoning loops if a sidecar had to be injected into every single agent container.
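The scoped-token pattern can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not the behavior of SPIRE, Authorino, or any Red Hat component: the `ScopedToken` type, the `exchange` and `authorize` functions, the approval table, and the SPIFFE ID and tool names are hypothetical, and real tokens would be signed and verified cryptographically rather than compared as plain fields.

```python
import time
from dataclasses import dataclass
from typing import Dict, FrozenSet, Optional


@dataclass(frozen=True)
class ScopedToken:
    """Hypothetical short-lived token valid for exactly one tool."""
    subject: str       # workload identity of the agent (a SPIFFE-style ID)
    audience: str      # the single tool this token may be used against
    expires_at: float  # expiry as a Unix timestamp


def exchange(subject: str, tool: str, approved: Dict[str, FrozenSet[str]],
             ttl_seconds: float = 60.0, now: Optional[float] = None) -> ScopedToken:
    """Issue a token for one tool, but only if that tool is approved for the agent."""
    now = time.time() if now is None else now
    if tool not in approved.get(subject, frozenset()):
        raise PermissionError(f"{subject} is not approved for {tool}")
    return ScopedToken(subject=subject, audience=tool, expires_at=now + ttl_seconds)


def authorize(token: ScopedToken, caller: str, tool: str,
              now: Optional[float] = None) -> bool:
    """Gateway-side check: the caller, the tool, and the expiry must all match."""
    now = time.time() if now is None else now
    return token.subject == caller and token.audience == tool and now < token.expires_at


# Illustrative identities and approvals (all values are made up).
AGENT = "spiffe://example.org/agents/billing"
APPROVED = {AGENT: frozenset({"ledger.read"})}
token = exchange(AGENT, "ledger.read", APPROVED, ttl_seconds=60.0, now=1000.0)
```

Because the token names a single tool and expires quickly, an agent that starts calling other tools is refused at the gateway even though its identity is valid, which is the blast-radius containment described above.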

Of course, AI reasoning is operationally useless without the ability to act on the underlying environment. While agents reason, they must hand off execution to systems that rigorously respect business governance. This is where Red Hat positions Ansible as an additional, critical, deterministic circuit breaker for infrastructure beyond the cluster. An agent might analyze log data and independently recommend a configuration change to solve a platform issue, but Ansible enforces immutable policies and ensures a human remains in the loop before massive-scale execution occurs on mission-critical servers. On the cluster itself, Red Hat AI governs agent execution natively, with access controls, gateways, and human-in-the-loop capabilities like the Responses API, but the broader infrastructure state remains tightly governed.
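A deterministic circuit breaker of this kind can be pictured as a small, rule-based gate sitting between an agent's recommendation and its execution. The Python sketch below is a hypothetical illustration and does not use or represent any Ansible API: the `ChangeRequest` type, the `gate` function, the playbook allowlist, and the host-count threshold are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    REJECTED = "rejected"
    NEEDS_APPROVAL = "needs_approval"
    EXECUTE = "execute"


@dataclass(frozen=True)
class ChangeRequest:
    """Hypothetical change recommended by an agent."""
    playbook: str
    target_hosts: int
    approved_by: Optional[str] = None  # set once a human signs off


# Assumed policy values, for illustration only.
ALLOWED_PLAYBOOKS = frozenset({"restart_service.yml", "rotate_logs.yml"})
APPROVAL_THRESHOLD = 10  # changes touching this many hosts or more need a human


def gate(request: ChangeRequest) -> Decision:
    """Apply fixed rules: allowlist first, then human approval for large changes."""
    if request.playbook not in ALLOWED_PLAYBOOKS:
        return Decision.REJECTED
    if request.target_hosts >= APPROVAL_THRESHOLD and request.approved_by is None:
        return Decision.NEEDS_APPROVAL
    return Decision.EXECUTE


small_change = gate(ChangeRequest("rotate_logs.yml", target_hosts=2))
large_change = gate(ChangeRequest("restart_service.yml", target_hosts=500))
signed_off = gate(ChangeRequest("restart_service.yml", target_hosts=500, approved_by="ops-lead"))
unknown = gate(ChangeRequest("drop_database.yml", target_hosts=1))
```

The gate is deliberately free of any model call: the same request always yields the same decision, which is what makes it a dependable counterweight to a non-deterministic agent.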

With this secure infrastructure layer established, the strategic value flows directly from IT operations to the business process architect. Red Hat's "metal-to-agent" foundation essentially serves as the catalyst for next-generation workflow automation. Rather than relying on the rigid, deterministic constraints of traditional Robotic Process Automation (RPA), process architects can now safely design dynamic, multi-agent orchestrations. They can deploy specialized agents to autonomously handle unstructured workflow exceptions, negotiate cross-departmental approvals, and route complex decisions - acting with the absolute certainty that the underlying Agentic Service Mesh and Ansible's deterministic circuit breakers will enforce strict operational guardrails.

Modular Openness vs. Tightly Coupled Walled Gardens

To truly understand Red Hat’s market positioning, this strategy must be contrasted with the broader infrastructure ecosystem, most notably VMware (Broadcom). VMware is pushing a highly bundled, turnkey narrative by making Private AI a standard, tightly coupled component of VMware Cloud Foundation (VCF) 9.0. While VMware's out-of-the-box integration appeals to organizations seeking a single-vendor walled garden, it implicitly risks hardware and framework lock-in.

Red Hat, conversely, is championing a decidedly modular, open-ecosystem philosophy. By decoupling the reasoning engine from the infrastructure - supporting OpenAI-compatible APIs alongside self-hosted, fine-tuned open models via vLLM - Red Hat ensures that customers retain architectural portability. Furthermore, within the broader IBM portfolio context, Red Hat is smartly delineating its execution boundaries. While Red Hat AI handles agentic reasoning, memory, and MCP routing, stateful infrastructure execution and complex cloud dependency management can be seamlessly handed off to sister-company tools like HashiCorp’s Terraform and Project Infragraph. This modularity prevents vendor lock-in, giving enterprises the flexibility to swap out foundation models as the market evolves while maintaining a consistent, secure AgentOps control plane.

The Partner Monetization Engine

This architectural approach has profound commercial implications across the market spectrum. For large enterprises and midmarket customers, the strategic message is clear: AI adoption has matured beyond the sole purview of the innovation lab; it is now firmly the domain of the Chief Information Security Officer (CISO). By translating the inherently chaotic and unpredictable concept of Agentic AI into the highly familiar language of Zero Trust, identity management, and auditability, Red Hat is systematically dismantling the primary blocker to business AI deployment. Risk-averse organizations can now safely deploy cutting-edge models directly on their private, air-gapped data without fear of the consequences of unchecked autonomy.

Equally important are the implications for the robust channel ecosystem required to serve these segments. Red Hat’s aggressive packaging of "AgentOps" is nothing short of a massive, structural monetization engine. Midmarket organizations simply do not possess the internal engineering depth to string together fragmented open-source AI frameworks with Envoy gateways, MLflow tracing, and SPIFFE identity architectures. They rely entirely on Managed Service Providers (MSPs) and System Integrators (SIs). Red Hat is essentially absorbing the daunting complexity of AgentOps and packaging it into repeatable reference architectures. This empowers channel partners to pivot toward offering highly lucrative "AgentOps-as-a-Service," driving sustained recurring revenue by monitoring, securing, and governing agentic fleets on behalf of their clients. Ultimately, Red Hat is not trying to compete in the exhausting race to build the smartest artificial brain; it is quietly and efficiently building the indispensable nervous system required for the business agentic era.